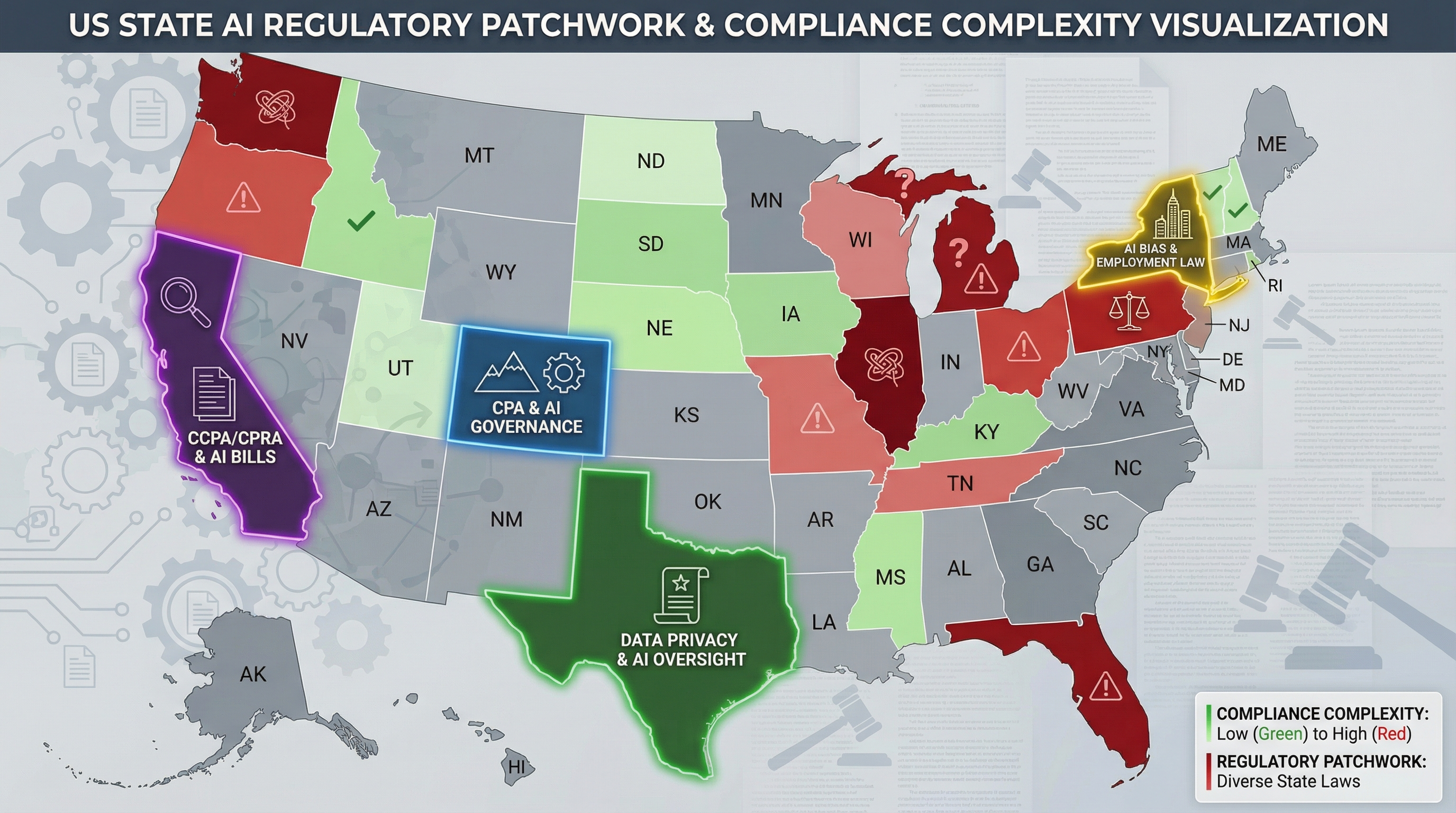

If you're an AI company trying to figure out which laws apply to you, good luck. The White House released a national AI framework in March, but it explicitly preempts nothing. Meanwhile, state laws are taking effect with conflicting requirements, overlapping jurisdictions, and enough complexity to keep an army of compliance lawyers employed for decades.

Welcome to 2026: the year AI regulation went from theoretical to real, from federal to state, and from uniform to chaotic.

Colorado: The Algorithmic Discrimination Watchdog

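Colorado's AI Act (SB24-205) was originally scheduled to take effect February 1, 2026; the legislature has since pushed the effective date to June 30, 2026. It remains the most comprehensive state AI law yet. If you develop or deploy "high-risk AI systems" in Colorado, you must exercise "reasonable care" to prevent algorithmic discrimination. That sounds straightforward until you try to pin down what counts as "reasonable care" or "algorithmic discrimination."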

The law requires documentation, impact assessments, and notifications to consumers when AI makes consequential decisions about them. Enforcement rests with the Colorado Attorney General; the Act does not create a private right of action. For companies operating nationally, Colorado's requirements have effectively become the baseline: it's easier to comply everywhere than to build state-specific systems.

California: The Transparency Champion

California didn't pass one AI law; it passed several. The Transparency in Frontier AI Act (SB 53) requires risk frameworks and incident reporting for large frontier models, and took effect January 1, 2026. The AI Transparency Act (SB 942) mandates watermarking of AI-generated content; it was originally set for the same date, but its operative date was later moved to August 2, 2026.

The watermarking requirement is particularly challenging. If your AI generates images, audio, or video, you must embed detectable markers that identify the content as AI-generated. The technical standards are still evolving, creating a moving target for compliance.

Texas: The Responsible AI Governance Act

Texas took a different approach. The Responsible AI Governance Act (TRAIGA), effective January 1, 2026, focuses primarily on government use of AI and prohibits specific harmful applications: behavioral manipulation, unlawful discrimination, violence incitement, and deepfake child sexual abuse material.

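Texas's law is narrower than Colorado's, and its own penalties are civil, enforced by the state attorney general. Prison exposure for the worst conduct, such as deepfake child sexual abuse material, comes from Texas criminal law rather than from TRAIGA itself. The message: if you use AI for truly harmful purposes in Texas, fines may be the least of your problems.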

New York, Oregon, and the Rest

New York passed Assembly Bill A3411B, requiring generative AI systems to display notices when outputs may be inaccurate. Oregon enacted Senate Bill 1546, creating a regulatory regime for "AI companions" that simulate human relationships. Arizona, Georgia, Idaho, Kentucky, Virginia, and Washington all have AI bills moving through their legislatures.

Each state has its own definitions, requirements, enforcement mechanisms, and penalties. Some focus on disclosure; others on discrimination; others on specific use cases like healthcare or hiring. There's no uniformity, no reciprocity, and no clear hierarchy.

The Federal Response: Preempt Nothing

The White House's National AI Legislative Framework, released March 20, 2026, recommends federal standards but explicitly avoids preempting state laws. The framework suggests a uniform national approach would be better than a patchwork, but stops short of mandating one.

This creates the worst of both worlds: federal guidance that adds complexity without simplifying compliance, and state laws that continue to multiply. Companies must navigate federal recommendations, sector-specific regulations from agencies like the FTC and CFPB, and a growing maze of state requirements.

The Compliance Burden

For AI companies, the compliance costs are mounting fast. Legal teams must track as many as fifty state regulatory regimes, each at a different stage of drafting or enforcement. Engineering teams must build systems that can satisfy conflicting requirements. Product teams must navigate disclosure obligations that vary by state.
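To make the engineering side concrete, here is a minimal Python sketch of how a team might track this. It assumes a hand-maintained table of obligations per state and treats "comply everywhere" as the union of those obligations. The labels are hypothetical shorthand paraphrased from the state summaries in this article, not statutory text, and the function names are invented for illustration.

```python
from typing import Dict, FrozenSet, Iterable, List

# Hypothetical obligation labels, paraphrased from this article's summaries.
# They are illustrative shorthand, not a reading of any statute.
OBLIGATIONS: Dict[str, FrozenSet[str]] = {
    "CO": frozenset({"documentation", "impact_assessment", "consumer_notification"}),
    "CA": frozenset({"risk_framework", "incident_reporting", "content_watermark"}),
    "NY": frozenset({"inaccuracy_notice"}),
}


def obligations_for(states: Iterable[str]) -> FrozenSet[str]:
    """Return the union of obligations across the given states."""
    combined: set = set()
    for state in states:
        # Assumption: a state missing from the table contributes nothing.
        # A real tracker would more likely flag it for legal review.
        combined |= OBLIGATIONS.get(state.upper(), frozenset())
    return frozenset(combined)


def missing_controls(states: Iterable[str], implemented: Iterable[str]) -> List[str]:
    """List obligations for the given states that are not yet implemented."""
    return sorted(obligations_for(states) - set(implemented))


if __name__ == "__main__":
    # A product sold in Colorado and New York with only documentation in place.
    print(missing_controls(["CO", "NY"], {"documentation"}))
```

The union approach mirrors the "comply everywhere" strategy: each new state adds to the set of controls and never subtracts from it. That is also why the burden compounds as more laws take effect.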

The burden falls hardest on smaller companies. OpenAI and Google can afford armies of lawyers and compliance officers. Startups cannot. The patchwork regulatory environment creates a moat for incumbents who can handle complexity, while raising barriers for new entrants.

Somebody, eventually, will have to rationalize this system. Whether that happens through federal preemption, interstate compacts, or judicial interpretation remains to be seen. Until then, compliance chaos is the new normal.